The speed increase was marginal (17 spp) and the visual difference imperceptible.

At this point, it was time to use the 11 other hardware threads on the Ryzen 5 2600. Although Clang can generate SIMD instructions via auto-vectorization, I found the feature capricious to trigger.

typedef float F typedef int I struct V // Andrew Kensler

Adding colors to outline the different parts helps to show that the code is in fact very simple, with seven sections based on a Pixel Sampler that RayMarches into a Database.

#DENOISER 3 CUDA CODE#

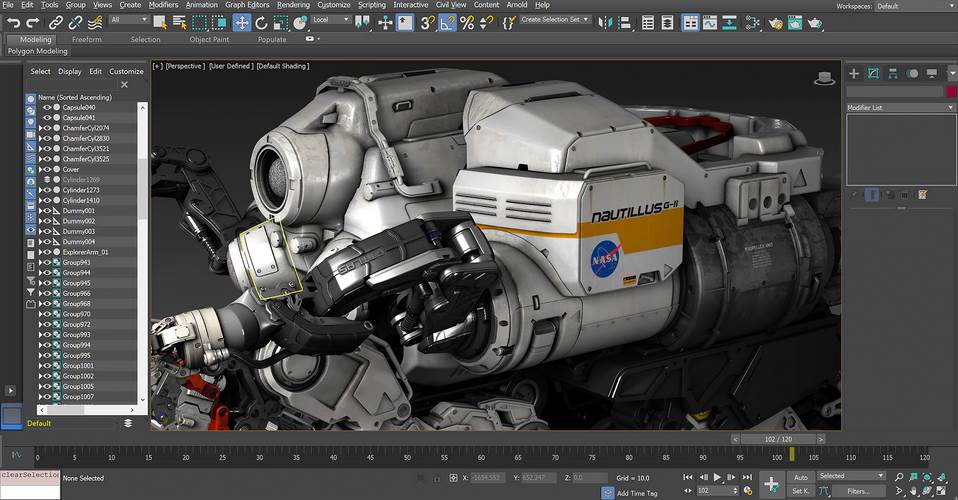

In the end, despite skepticism, I gave the OptiX denoiser a try and it blew my mind.

At first sight, the postcard pathtracer code (pixar.cpp) is a tad intimidating.

The optimizations started with compiler flags, then moved to SIMD, multi-threading, and GPU rendering via CUDA. The hardware used was a DB4 cube containing a Ryzen 5 2600 (6 cores, 12 threads at 3.5 GHz) with an Nvidia Pascal-based GTX 1050 Ti GPU. The name of the game was to maximize the samples per pixel (spp) at a resolution of 960x540. Instead of attempting to run as fast as possible, I decided on a time budget (1 minute) and resolved to generate the best image possible. This twist allowed me to change the rules of my self-imposed game.

The quality of the image is directly correlated with how many rays are cast per pixel.
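The time-budget idea can be sketched as a loop that keeps adding one sample per pixel until the clock runs out, then averages. This is only a minimal illustration, not the code from pixar.cpp: `renderWithBudget`, `BudgetResult`, and the `samplePixel` callback are hypothetical names standing in for the real per-pixel sampler.

```cpp
#include <chrono>
#include <cstddef>
#include <functional>
#include <utility>
#include <vector>

// Result of a time-budgeted render (hypothetical helper type).
struct BudgetResult {
    std::vector<float> image; // per-pixel average of all accumulated samples
    int spp;                  // number of completed passes = samples per pixel
};

// Accumulates one sample per pixel per pass until the budget is spent.
// `samplePixel` is an assumed stand-in for the pathtracer's sampler.
// At least one full pass always completes, so spp >= 1.
BudgetResult renderWithBudget(std::size_t pixelCount,
                              std::chrono::milliseconds budget,
                              const std::function<float(std::size_t)>& samplePixel) {
    std::vector<float> sum(pixelCount, 0.0f);
    int spp = 0;
    const auto deadline = std::chrono::steady_clock::now() + budget;
    do {
        for (std::size_t p = 0; p < pixelCount; ++p) {
            sum[p] += samplePixel(p);
        }
        ++spp;
    } while (std::chrono::steady_clock::now() < deadline);

    // Convert accumulated sums into per-pixel averages.
    for (float& v : sum) {
        v /= static_cast<float>(spp);
    }
    return {std::move(sum), spp};
}
```

With this shape, any optimization that makes a pass cheaper shows up directly as a higher `spp` for the same budget, which is the metric the game is scored on.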

Last week, I revisited Andrew Kensler's business card raytracer to make it run as fast as possible. It was a lot of fun and I learned a lot in the process. I enjoyed it so much that I decided to revisit Andrew's follow-up, the postcard-sized pathtracer. I spent a lot of time trying to make sense of that code back in 2018 and I knew it was going to be a bigger challenge. Because it is a pathtracer and not a raytracer, it is more computationally intensive.

#DENOISER 3 CUDA DRIVERS#

Drivers 442.50 and newer should behave the same so far, but this can change in future versions.

Revisiting the postcard pathtracer with CUDA and Optix

#DENOISER 3 CUDA DRIVER#

Right, the R440 driver branch up to version 442.50 contained a different training network, which was improved in that release and later versions. It's pretty big already. There actually isn't even a separate training network for the LDR mode anymore, to make it smaller.

#DENOISER 3 CUDA HOW TO#

Yes, the AI denoiser can have differently trained networks or algorithms in different drivers, with the goal of improving the results and performance.

And if so, is there some way to store / extract the model used for one particular version of drivers so that I could always pass it to optixDenoiserSetModel?

If so, is the difference between drivers caused by a different default model passed to optixDenoiserSetModel when data = NULL?

Yes, the OptiX AI denoiser has lived in the driver since OptiX 6.5.0.

Is this expected to happen with different versions of drivers?

#DENOISER 3 CUDA WINDOWS#

Greetings, I've been implementing a CUDA 10.2 + OptiX 7 pathtracing program that also uses the OptiX 7 denoiser, and I've come across the following problem:

Each version of the drivers I have tried so far produces different denoiser results. I first noticed this when I deployed code from a Windows developer machine to a Linux server machine; after that I tried reinstalling a couple of driver versions on the Windows machine and the results were still different. Regardless of whether I used HDR or SDR, and whether I left hdrIntensity = 0 or calculated it with optixDenoiserComputeIntensity, the results differed every time between different drivers, while the results without denoising were always identical.

For reference: the input to the denoiser consists of grayscale images (R = G = B), and all channels are normalized to the 0 … 1 interval (0 = minimum irradiation, 1 = maximum irradiation, as my application actually works only with irradiation and not with separate color channels).

Driver versions where differences in results were clearly visible on Windows were, for example: 440.97 DCH vs.

Is this expected to happen with different versions of drivers? If so, is the difference between drivers caused by a different default model passed to optixDenoiserSetModel when data = NULL? And if so, is there some way to store / extract the model used for one particular version of drivers so that I could always pass it to optixDenoiserSetModel?

Since in my application the results of the pathtracing + denoising are used as an input to another calculation, I would really prefer to have the results consistent / stable regardless of the driver version used, if possible.
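To put a number on how much the denoised output drifts between two driver versions, one option is to dump the result of each run and compare the buffers pixel by pixel. The following is just a rough sketch under the assumption that both results are available as float arrays of equal length with values in 0 … 1; `compareBuffers` and `BufferDiff` are hypothetical names and not part of the OptiX API.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Summary of the per-pixel deviation between two buffers (hypothetical type).
struct BufferDiff {
    float maxAbs;   // largest absolute per-pixel difference
    double meanAbs; // mean absolute per-pixel difference
};

// Compares two denoised grayscale buffers, e.g. produced under two
// different driver versions. Throws if the buffers differ in size.
BufferDiff compareBuffers(const std::vector<float>& a, const std::vector<float>& b) {
    if (a.size() != b.size()) {
        throw std::invalid_argument("buffer sizes differ");
    }
    BufferDiff d{0.0f, 0.0};
    if (a.empty()) {
        return d;
    }
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float diff = std::fabs(a[i] - b[i]);
        d.maxAbs = std::max(d.maxAbs, diff);
        sum += diff;
    }
    d.meanAbs = sum / static_cast<double>(a.size());
    return d;
}
```

Running the same comparison on the non-denoised buffers should report zero for both values if, as described, the pathtracing results are identical across drivers, so any non-zero figure can be attributed to the denoiser.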